Network bonding is the creation of a single bonded interface by combining two or more Ethernet interfaces. In Linux, bonding provides high availability for your network connection and, depending on the bonding mode, can also increase throughput. Bonding is conceptually the same as port (NIC) teaming.

Bonding also allows you to create multi-gigabit pipes to carry traffic through the busiest parts of your network. For example, you can aggregate three 1-gigabit ports into a 3-gigabit trunk, which gives roughly the same total capacity as a single 3 Gbit/s interface. In most modes, however, a single connection still travels over one slave and is limited to that slave's speed.

Step 1.

Create the file ifcfg-bond0 with the IP address, netmask and gateway. Shown below is my test bonding config file.

# vi /etc/sysconfig/network-scripts/ifcfg-bond0

As an example, we assume the IP address is 10.185.15.7.

Add the following lines:

DEVICE=bond0
IPADDR=10.185.15.7
NETMASK=255.255.255.0
GATEWAY=10.185.15.1
USERCTL=no
BOOTPROTO=none
ONBOOT=yes
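A common mistake in this file is a netmask that leaves the gateway outside the bond's subnet, in which case the gateway is not reachable on that link. For example, a /30 mask (255.255.255.252) around 10.185.15.7 covers only 10.185.15.4 to 10.185.15.7, so 10.185.15.1 would fall outside it. The sketch below is a hypothetical helper, not part of RHEL's network scripts: `ip_to_int`, `same_subnet` and `bond0_cfg` are illustrative names. It checks the addressing and prints the ifcfg-bond0 contents, assuming bash.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of RHEL's network scripts): check that the
# gateway is inside the bond's subnet, then print an ifcfg-bond0 file.

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local -a o
  IFS=. read -r -a o <<< "$1"
  echo $(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))
}

# Succeed if the address and the gateway share a network under the netmask.
same_subnet() {
  local addr mask gw
  addr=$(ip_to_int "$1")
  mask=$(ip_to_int "$2")
  gw=$(ip_to_int "$3")
  (( (addr & mask) == (gw & mask) ))
}

# Print ifcfg-bond0 contents; refuse if the gateway is outside the subnet.
bond0_cfg() {
  local addr="$1" mask="$2" gw="$3"
  if ! same_subnet "$addr" "$mask" "$gw"; then
    echo "gateway $gw is outside $addr/$mask" >&2
    return 1
  fi
  cat <<EOF
DEVICE=bond0
IPADDR=$addr
NETMASK=$mask
GATEWAY=$gw
USERCTL=no
BOOTPROTO=none
ONBOOT=yes
EOF
}

# Example (as root): write to a temporary file first so that a failed
# check does not leave an empty ifcfg-bond0 behind.
#   bond0_cfg 10.185.15.7 255.255.255.0 10.185.15.1 > /tmp/ifcfg-bond0 &&
#     cp /tmp/ifcfg-bond0 /etc/sysconfig/network-scripts/ifcfg-bond0
```

Printing to stdout instead of writing the file directly keeps the helper easy to inspect before anything under /etc is touched.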

Step 2.

Modify the eth0, eth1 and eth2 configuration files as shown below (only eth0 and eth1 are shown; eth2 is configured the same way). Comment out or remove the IP address, netmask and gateway from each of these files, since these settings should come only from the ifcfg-bond0 file above. Make sure you add the MASTER and SLAVE settings to each file.

Eth0 file

# vi /etc/sysconfig/network-scripts/ifcfg-eth0

Add the following lines:

DEVICE=eth0
BOOTPROTO=none
ONBOOT=no
MASTER=bond0
SLAVE=yes

Eth1 file

# vi /etc/sysconfig/network-scripts/ifcfg-eth1

Add or modify the following lines:

DEVICE=eth1
BOOTPROTO=none
ONBOOT=no
USERCTL=no
MASTER=bond0
SLAVE=yes
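When several slave files need the same change, the edits described in this step can be scripted. The following is a minimal sketch, assuming GNU sed (for `-i` and `-E`); `make_slave` is a hypothetical helper name. It comments out the address settings, forces BOOTPROTO=none and appends MASTER/SLAVE only if they are missing, so running it twice does no harm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: turn an existing ifcfg-ethX file into a bond slave.
# Requires GNU sed (for -i and -E), as shipped with RHEL.
make_slave() {
  local file="$1" master="${2:-bond0}"
  # Comment out address settings; they belong in ifcfg-bond0 only.
  sed -i -E 's/^(IPADDR|NETMASK|GATEWAY)=/#&/' "$file"
  # The slave has no address of its own, so it needs no boot protocol.
  sed -i -E 's/^BOOTPROTO=.*/BOOTPROTO=none/' "$file"
  # Add MASTER/SLAVE only if they are not there yet (safe to rerun).
  grep -q '^MASTER=' "$file" || echo "MASTER=$master" >> "$file"
  grep -q '^SLAVE=' "$file" || echo "SLAVE=yes" >> "$file"
}

# Example (as root, after backing up the original files):
#   for dev in eth0 eth1 eth2; do
#     make_slave "/etc/sysconfig/network-scripts/ifcfg-$dev"
#   done
```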

Step 3.

Set the parameters for the bond0 bonding kernel module. Select the bonding mode based on your needs. The modes are:

Mode 0 (balance-rr)

This mode transmits packets in sequential order from the first available slave through the last. If two real interfaces are slaves in the bond and two packets are due to leave through the bonded interface, the first is transmitted on the first slave and the second on the second slave. The third packet is sent on the first slave again, and so on. This provides load balancing and fault tolerance.
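The round-robin rule can be modelled in a few lines: packet N goes out on slave N modulo the number of slaves. This is a toy illustration of the idea described above, not the bonding driver's code; `rr_schedule` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Toy model of balance-rr: packet N leaves on slave N modulo slave count.
# Illustration only; this is not the bonding driver's code.
rr_schedule() {
  local packets="$1"; shift
  local -a slaves=("$@")
  local i
  for (( i = 0; i < packets; i++ )); do
    echo "packet $((i + 1)) -> ${slaves[i % ${#slaves[@]}]}"
  done
}

# Example: rr_schedule 3 eth0 eth1
#   packet 1 -> eth0
#   packet 2 -> eth1
#   packet 3 -> eth0
```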

Mode 1 (active-backup)

In this mode only one slave in the bond is active at any given time; the others are kept in a backup state. A different slave becomes active only if the active slave loses its link. This mode provides fault tolerance.
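The failover decision described above can be sketched as follows: keep the current slave while its link is up, otherwise hand over to the first slave that still has link. This is a simplified model that ignores driver options such as a configured primary slave; `next_active` and its `name=up`/`name=down` argument format are hypothetical.

```shell
#!/usr/bin/env bash
# Toy model of active-backup failover (ignores options such as "primary").
# Arguments: the currently active slave, then each slave as name=up or
# name=down. Illustration only; this is not the bonding driver's code.
next_active() {
  local current="$1"; shift
  local entry name state first_up=""
  for entry in "$@"; do
    name="${entry%%=*}"
    state="${entry#*=}"
    [ "$state" = up ] || continue
    if [ "$name" = "$current" ]; then
      echo "$name"        # current slave still has link: no failover
      return 0
    fi
    [ -n "$first_up" ] || first_up="$name"
  done
  if [ -n "$first_up" ]; then
    echo "$first_up"      # fail over to the first backup with link
    return 0
  fi
  return 1                # no slave has link, so the bond itself is down
}

# Example: next_active eth0 eth0=down eth1=up   # prints eth1
```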

Mode 2 (balance-xor)

This mode transmits based on an XOR formula: (source MAC address XOR destination MAC address) modulo slave count. This selects the same slave for each destination MAC address and provides load balancing and fault tolerance.
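A worked version of this formula makes it clear why a given destination always maps to the same slave. To keep the numbers small, the sketch XORs only the last octet of each MAC address; the driver's exact hash depends on the kernel version and on the xmit_hash_policy setting, so treat this as an illustration. `xor_slave` is a hypothetical name.

```shell
#!/usr/bin/env bash
# Simplified balance-xor selection:
#   slave index = (source MAC XOR destination MAC) modulo slave count
# Only the last octet of each MAC is used here to keep the example small;
# the real hash depends on the kernel version and xmit_hash_policy.
xor_slave() {
  local src_mac="$1" dst_mac="$2" slave_count="$3"
  local src_octet="${src_mac##*:}" dst_octet="${dst_mac##*:}"
  echo $(( (16#$src_octet ^ 16#$dst_octet) % slave_count ))
}

# Example: 0x0a XOR 0x07 = 13, and 13 modulo 2 = 1
#   xor_slave 00:16:3e:00:00:0a 00:16:3e:00:00:07 2   # prints 1
```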

Mode 3 (broadcast)

This mode transmits everything on all slave interfaces. It is rarely used (only for specific purposes) and provides fault tolerance only.

Mode 4 (802.3ad)

This mode is known as dynamic link aggregation. It creates aggregation groups whose members share the same speed and duplex settings. This mode requires a switch that supports IEEE 802.3ad dynamic link aggregation (LACP).

Mode 5 (balance-tlb)

This mode is called adaptive transmit load balancing. Outgoing traffic is distributed according to the current load and queue on each slave interface. Incoming traffic is received by the current slave.
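One way to picture the transmit decision is "send on the slave that is least busy relative to its speed". The sketch below models that idea only: load and speed are passed in by hand as hypothetical `name:load_mbps:speed_mbps` arguments, and `tlb_pick` is an illustrative name, not something the driver exposes.

```shell
#!/usr/bin/env bash
# Toy model of balance-tlb transmit selection: choose the slave whose
# current load is lowest relative to its link speed.
# Arguments look like name:load_mbps:speed_mbps. Illustration only.
tlb_pick() {
  printf '%s\n' "$@" | awk -F: '
    $3 > 0 {
      ratio = $2 / $3              # load relative to link speed
      if (name == "" || ratio < best) { best = ratio; name = $1 }
    }
    END { if (name == "") exit 1; print name }'
}

# Example: tlb_pick eth0:600:1000 eth1:200:1000   # prints eth1
```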

Mode 6 (balance-alb)

This is adaptive load balancing mode. It includes balance-tlb plus receive load balancing (rlb) for IPv4 traffic. Receive load balancing is achieved through ARP negotiation: the bonding driver intercepts the ARP replies sent by the server on their way out and overwrites the source hardware address with the unique hardware address of one of the slaves in the bond, so that different clients use different hardware addresses for the server.
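The ARP rewriting can be modelled as a small table from client IP to slave MAC: a new client is handed the next slave's MAC, and later replies to the same client reuse it. This is a simplified sketch of the behaviour described above, assuming a plain round-robin hand-out; `alb_arp_replies` and the example MAC addresses are hypothetical, and bash 4 or later is needed for associative arrays.

```shell
#!/usr/bin/env bash
# Toy model of balance-alb receive load balancing. First argument: the
# slave MACs (space separated); remaining arguments: client IPs in the
# order the server sends them ARP replies. A new client gets the next
# slave MAC (round robin); a known client keeps the MAC it was given.
# Illustration only; requires bash 4+ for associative arrays.
alb_arp_replies() {
  local -a macs=($1); shift
  local -A assigned=()          # client IP -> slave MAC handed out
  local next=0 client
  for client in "$@"; do
    if [[ -z "${assigned[$client]:-}" ]]; then
      assigned[$client]="${macs[next % ${#macs[@]}]}"
      next=$(( next + 1 ))
    fi
    echo "$client -> ${assigned[$client]}"
  done
}

# Example:
#   alb_arp_replies "02:00:00:00:00:01 02:00:00:00:00:02" \
#     10.0.0.2 10.0.0.3 10.0.0.2
#   10.0.0.2 -> 02:00:00:00:00:01
#   10.0.0.3 -> 02:00:00:00:00:02
#   10.0.0.2 -> 02:00:00:00:00:01
```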

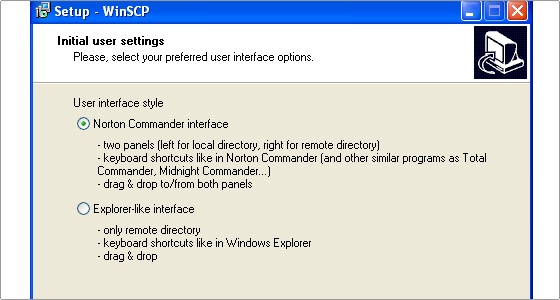

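# vi /etc/modprobe.conf

Add the following lines to /etc/modprobe.conf. The mode option selects the bonding mode (mode=1, active-backup, in this example) and miimon=100 makes the driver check the link state of each slave every 100 milliseconds:

alias bond0 bonding
options bond0 mode=1 miimon=100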
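Because a mistyped mode is easy to miss in these two lines, a small generator can validate the mode before anything is written. The bonding driver accepts the mode either as a number or by name (for example mode=active-backup); this sketch translates names to numbers only to catch typos early. `bond_modprobe_lines` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the /etc/modprobe.conf lines for a bond.
# Accepts the mode as a number (0-6) or a name; rejects anything else.
bond_modprobe_lines() {
  local bond="$1" mode="$2" miimon="${3:-100}"
  case "$mode" in
    0|balance-rr)    mode=0 ;;
    1|active-backup) mode=1 ;;
    2|balance-xor)   mode=2 ;;
    3|broadcast)     mode=3 ;;
    4|802.3ad)       mode=4 ;;
    5|balance-tlb)   mode=5 ;;
    6|balance-alb)   mode=6 ;;
    *) echo "unknown bonding mode: $mode" >&2; return 1 ;;
  esac
  printf 'alias %s bonding\noptions %s mode=%s miimon=%s\n' \
    "$bond" "$bond" "$mode" "$miimon"
}

# Example (as root):
#   bond_modprobe_lines bond0 active-backup >> /etc/modprobe.conf
```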

Step 4.

Load the bond driver module from the command prompt.

# modprobe bonding

Step 5.

Restart the network, or restart the computer.

# service network restart

Or

# /etc/init.d/network restart

Once the network is back up (or the machine has rebooted), check the bonding status in /proc:

# cat /proc/net/bonding/bond0

You should see output similar to the following:

Bonding Mode: adaptive load balancing
Primary Slave: None
Currently Active Slave: eth2
MII Status: up
MII Polling Interval (ms): 100
Up Delay (ms): 0
Down Delay (ms): 0

Slave Interface: eth2
MII Status: up
Link Failure Count: 0

This sample was taken from a bond running in adaptive load balancing mode (mode 6); with mode=1 the Bonding Mode line reports active-backup instead.

Run ifconfig -a and check that the bond0 interface is up. To verify that failover works, take the currently active slave down with ifdown (eth2 in the sample above), then check /proc/net/bonding/bond0 again and look at the “Currently Active Slave” field. For a live test, run a continuous ping to the bond0 IP address from a different machine while you take the active interface down. The ping should not break.
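The failover check above can be partly scripted. The sketch below reads the “Currently Active Slave” field from /proc/net/bonding/bond0 (or from a file passed as an argument) and, in a second function, takes that slave down and reports which one took over. Both `active_slave` and `failover_check` are hypothetical helper names, and the two-second wait is an arbitrary assumption; keep the ping from another machine running while you use it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the "Currently Active Slave" reported by the
# bonding driver. Reads /proc/net/bonding/bond0 unless a file is given.
# Fails if the field is absent (for example in balance-rr mode).
active_slave() {
  local status_file="${1:-/proc/net/bonding/bond0}"
  awk -F': ' '/^Currently Active Slave:/ { print $2; found = 1 }
              END { exit !found }' "$status_file"
}

# Take the active slave down and report which slave took over.
# Run as root on the bonded host.
failover_check() {
  local before after
  before=$(active_slave) || return 1
  ifdown "$before"
  sleep 2    # arbitrary pause so the driver can notice the lost link
  after=$(active_slave) || return 1
  echo "active slave: $before -> $after"
  [ "$after" != "$before" ]
}
```

Remember to bring the interface back with ifup afterwards.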